Robots.txt control

Magento 2 allows fully customisable robots.txt files, in order to help control which pages search engine bots crawl. For large sites this can significantly help to control crawl budget.

How to edit robots.txt in Magento 2

Content > Design > Configuration > Edit top global store > Search engine robots

Although simple to edit, Magento 2 has been criticised for its rather unintuitive structure. Few would describe robots.txt control as being part of the design of a website.

If you have multiple stores (for example, on an international site), make sure you change the robots file from the global site, as this file sits on the root and is the one crawlers will reference before crawling your site.

Sitewide changes

Changing the “Default Robots” option will change the robots settings for the entire site – this has 4 options:

- Index, follow – all pages are set to appear in search engine results and the links on them are followed (unless the specific path is blocked)

- Index, nofollow – all pages are set to appear in search engine results, but the links on them are not followed

- Noindex, follow – no pages are set to appear in search engine results, but the links on them can still be followed

- Noindex, nofollow – no pages are set to appear in search engine results, and the links on them are not followed

NOTE – As of 1st September 2019 Google no longer supports noindex directives in robots.txt, and it does not support nofollow directives there either. Noindex and nofollow directives should instead be included in the <head> HTML of pages (how to do so is discussed in the “Noindex, nofollow controls” section). This makes changing the settings above in Magento 2 redundant.

Path specific changes

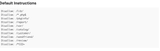

Path specific changes can be edited from the “Edit custom instruction of robots.txt file” editor box.

By default Magento 2 blocks the following paths:

However, with great power comes great responsibility; care should always be taken when editing robots files to ensure they do not block search engines from crawling unintended paths (or entire websites!).

Testing your robots.txt file

Before updating your robots.txt we recommend testing to see which URLs it will block using Google’s Robots.txt tester in Search Console.

If you’re making big changes, it may be useful to take things a step further and imitate a crawl of the site with your new robots.txt settings. This can be done using a custom robots.txt file in Screaming Frog, which lets you see all the pages you’ll be blocking (Configuration > robots.txt > Custom > enter your URL and edit the robots file).
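As a quick local complement to those tools, a draft robots.txt can also be checked against a list of URLs with Python's standard `urllib.robotparser` module. The sketch below is illustrative only: the rules, the example.com URLs and the `blocked_urls` helper are assumptions, not Magento defaults. Note that the standard-library parser matches plain path prefixes, so it will not evaluate `*` wildcards the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft rules; replace with the contents of your own editor box.
DRAFT_ROBOTS = """\
User-agent: *
Disallow: /checkout/
Disallow: /customer/
"""

# Hypothetical URLs to check against the draft.
SAMPLE_URLS = [
    "https://example.com/",
    "https://example.com/checkout/cart/",
    "https://example.com/customer/account/login/",
    "https://example.com/womens-shoes.html",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


for url in blocked_urls(DRAFT_ROBOTS, SAMPLE_URLS):
    print("blocked:", url)
```

Running this before saving a change gives an early warning if a new rule catches more paths than intended.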

Noindex, nofollow controls

As Google does not recognise noindex and nofollow directives via robots.txt, if you want to implement these directives, they must be added via the <head> section of a page’s HTML.

The best way to manage noindex, nofollow controls for specific pages, or groups of pages in Magento 2 is via an extension. For example:

FME noindex nofollow
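Whichever extension is used, it is worth confirming that the directive actually appears in the page's <head>. A minimal sketch using Python's standard `html.parser` is shown here; the sample HTML and the `robots_directives` helper are assumptions for illustration, and in practice the HTML would be the source of a live page.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() != "robots":
            return
        content = attributes.get("content") or ""
        # Split "NOINDEX,NOFOLLOW" into normalised, lower-case directives.
        self.directives.extend(
            part.strip().lower() for part in content.split(",") if part.strip()
        )


def robots_directives(html):
    """Return the list of meta robots directives found in the HTML text."""
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page source used only to demonstrate the helper.
SAMPLE_HTML = """
<html>
  <head>
    <title>Example page</title>
    <meta name="robots" content="NOINDEX,NOFOLLOW"/>
  </head>
  <body></body>
</html>
"""

print(robots_directives(SAMPLE_HTML))
```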

Session IDs

On some Magento sites, session IDs (SIDs) are automatically appended to URLs when someone (or some bot) visits the site. This is normally used to ensure customers stay logged in when switching between stores.

This means that every time Googlebot, or any other search engine, crawls a site, it will see new URLs with different SID parameters. This can cause a huge number of duplicate pages, since the same page gets a different parameter URL on every visit. These duplicate parameter pages can also be indexed if they are not properly handled with canonicals, and can dilute internal linking signals on your site.

SID URLs are also blocked by the default Magento 2 robots.txt. This can cause huge issues: if the URLs Google encounters contain an SID parameter, they will be blocked and Google will not crawl your site.

To remove SIDs, go to Stores > Configuration > General > Web > Session Validation Settings > Use SID on Storefront > No
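To gauge how much duplication SIDs have caused in an existing crawl export, the parameter can be stripped and the URLs de-duplicated. The sketch below uses only Python's standard `urllib.parse`; the `strip_sid` helper and the example.com URLs are assumptions for illustration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def strip_sid(url, param="SID"):
    """Return url with the session ID query parameter removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() != param.lower()
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


# Hypothetical crawl export: the same page seen with different session IDs.
CRAWLED = [
    "https://example.com/shoes.html?SID=abc123",
    "https://example.com/shoes.html?SID=def456",
    "https://example.com/shoes.html?SID=ghi789&color=red",
]

# dict.fromkeys keeps the first occurrence of each URL and preserves order.
unique_pages = list(dict.fromkeys(strip_sid(url) for url in CRAWLED))
print(len(CRAWLED), "crawled URLs collapse to", len(unique_pages), "pages")
```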

Redirection

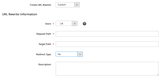

Redirects can easily be added to Magento 2 via Marketing > SEO & Search > URL Rewrites > Add URL Rewrite

Request path = the URL string you want to be redirected

Target path = the destination URL string for your redirect

These can be custom redirects (e.g. URL1 to URL2), or the redirection of a whole category, product type, or CMS page (e.g. /about/) and all of its child pages, which gives you the flexibility to control redirection with ease.

The redirect type can also be configured depending on why you’re redirecting pages:

- 301 – permanent redirects

- 302 – temporary redirects
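When adding many rewrites at once, it can help to check the planned list for redirect chains and loops before entering it in the admin. The sketch below is a hypothetical model, not Magento's own rewrite storage: it assumes a simple mapping of request path to a (target path, redirect type) pair, and the `resolve` and `find_chains` helpers are illustrative names.

```python
def resolve(path, rewrites):
    """Follow rewrites from path and return (final_path, number_of_hops).

    Raises ValueError if the rewrites loop back on themselves.
    """
    seen = [path]
    while path in rewrites:
        path = rewrites[path][0]
        if path in seen:
            raise ValueError("redirect loop: " + " -> ".join(seen + [path]))
        seen.append(path)
    return path, len(seen) - 1


def find_chains(rewrites):
    """Return request paths that need more than one hop to reach their target."""
    chains = {}
    for request_path in rewrites:
        final_path, hops = resolve(request_path, rewrites)
        if hops > 1:
            chains[request_path] = (final_path, hops)
    return chains


# Hypothetical planned rewrites: request path -> (target path, redirect type).
REWRITES = {
    "old-shoes.html": ("shoes.html", 301),
    "shoes.html": ("footwear.html", 301),
    "summer-sale.html": ("sale.html", 302),
}

for request_path, (final_path, hops) in find_chains(REWRITES).items():
    print(request_path, "reaches", final_path, "in", hops, "hops")
```

Any chain reported here could be flattened by pointing the original request path straight at the final target.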

Global redirects

Global redirects can only be added server-side; therefore you will likely need a developer to implement any global redirects on the site.

Redirect settings

If using Amasty, some additional redirection settings can be set up, including 301 redirects to search pages when a page 404s. However, this can be detrimental to SEO, since it can cause low value search pages to be crawled and indexed if not properly controlled, in addition to making it difficult to spot pages that 404.

These settings can be changed via Content > Amasty SEO Toolkit > General Settings > Enable Redirect from 404 to Search Results > No.

Sitemap Control

XML sitemaps can be created, and sitemap settings (update frequency and priority) configured, out of the box in Magento 2. However, if you want to exclude certain URLs from the sitemap (for example, canonicalised or noindex URLs), you will need a third party extension. Extensions let you remove out of stock products, specific URLs or categories from the sitemap, which isn’t possible by default in Magento 2.

You can also generate sitemaps for different storefronts. This is useful for telling Google which pages it should crawl on each of your stores, and for gathering index information once the sitemaps are submitted in Google Search Console.

Sitemaps update automatically at a configurable interval, so if your site has changed significantly you’ll want to re-generate your sitemaps to ensure they don’t contain lots of errors.
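One way to spot-check a regenerated sitemap is to parse it and compare its URLs against pages that should not be listed. The sketch below uses Python's standard `xml.etree.ElementTree` and the standard sitemap namespace; the sample sitemap, the `EXCLUDED` set and the helper names are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content; in practice, read the generated file instead.
SAMPLE_SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/shoes.html</loc>
    <changefreq>daily</changefreq>
    <priority>0.5</priority>
  </url>
  <url>
    <loc>https://example.com/discontinued-boots.html</loc>
    <changefreq>daily</changefreq>
    <priority>1.0</priority>
  </url>
</urlset>
"""

# Hypothetical set of URLs known to be noindexed, canonicalised or removed.
EXCLUDED = {"https://example.com/discontinued-boots.html"}


def sitemap_urls(xml_text):
    """Return every <loc> value listed in the sitemap, in document order."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def unwanted_entries(xml_text, excluded):
    """Return sitemap URLs that appear in the excluded set."""
    return [url for url in sitemap_urls(xml_text) if url in excluded]


for url in unwanted_entries(SAMPLE_SITEMAP, EXCLUDED):
    print("should not be in sitemap:", url)
```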

XML Sitemap Generation

XML sitemaps can be generated in Magento 2 via Marketing > SEO & Search > Site Map – this allows you to create sitemaps with the path and filename that you want them to have.

XML Sitemap Configuration

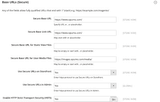

XML sitemaps can be configured via Stores > Settings > Configuration > Catalog > XML Sitemap. You can set update frequency and priority for category pages, products, and CMS pages.

You can also ensure that the sitemap is referenced in the site’s robots.txt file to make it easy for Google to identify and crawl using the following settings:

Some XML sitemap extensions our clients work with include:

HTML sitemaps

HTML sitemaps could be created manually using a CMS page in Magento 2. However, this would be very long-winded for anything other than the smallest ecommerce site.

Many extensions that offer XML sitemaps also offer HTML sitemaps to help improve the discoverability of pages for search engines and end-users.